The best B2B data is the kind your competitors can't buy from the same provider everyone else is using.

When companies source prospect lists from the same databases, the same contacts get hit with the same pitches from the same senders. The differentiation disappears. What separates high-performing outbound programs is proprietary data: information collected from sources others aren't mining.

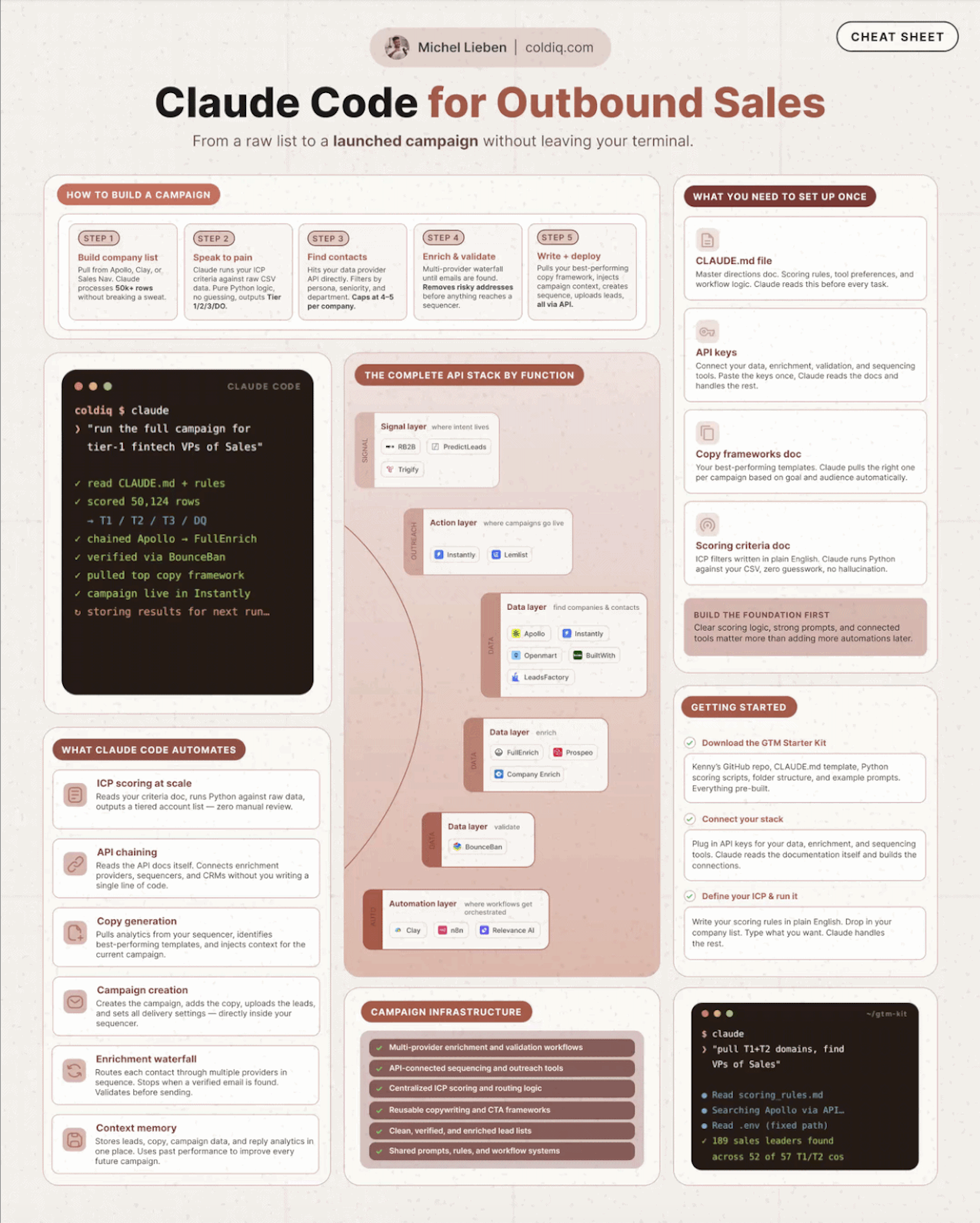

Claude Code changed the calculus for B2B teams willing to build their own data infrastructure. But knowing when to use Claude's native agent versus a dedicated scraping API makes the difference between a fast, scalable pipeline and one that hits walls immediately.

1. What Claude Code's agent can already do

Claude Code's native browsing handles a wide range of data tasks without any external API.

Point it at a company's website and it can extract pricing tiers, product focus, and team signals. Point it at a list of domains and it can return firmographic data in a spreadsheet. It automates what used to take hours of manual research.

→ Research any public company page and extract structured data

→ Compile information across multiple sources into a single dataset

→ Summarize landing pages, press releases, and case studies

→ Loop through domain lists and return consistent enrichment fields
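The last item depends on a fixed schema: every domain should come back with the same columns, even when a site gives up nothing for some of them. Below is a minimal sketch. It assumes the agent (or a script it writes) returns a dict of extracted values per domain. The field names in `FIELDS` are hypothetical placeholders for whatever your team enriches on.

```python
import csv
import io
from urllib.parse import urlparse

# Hypothetical enrichment schema: every row gets exactly these columns, in this
# order, regardless of what was extracted for a given domain.
FIELDS = ["domain", "company_name", "pricing_tiers", "product_focus", "headcount_signal"]


def normalize_domain(raw: str) -> str:
    """Reduce inputs like 'https://www.Acme.com/pricing' or 'acme.com' to 'acme.com'."""
    raw = raw.strip().lower()
    if "://" not in raw:
        raw = "https://" + raw  # urlparse only finds the host when a scheme is present
    host = urlparse(raw).hostname or ""
    return host.removeprefix("www.")


def to_row(domain: str, extracted: dict) -> dict:
    """Keep only schema fields; missing or empty values become empty strings."""
    row = {field: str(extracted.get(field, "") or "") for field in FIELDS}
    row["domain"] = normalize_domain(domain)
    return row


def rows_to_csv(rows: list[dict]) -> str:
    """Serialize rows to CSV text with a header line in schema order."""
    buf = io.StringIO()
    writer = csv.DictWriter(buf, fieldnames=FIELDS)
    writer.writeheader()
    writer.writerows(rows)
    return buf.getvalue()
```

Unknown keys are dropped and missing ones are blank, so results from different sites line up in one spreadsheet.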

For individual research tasks and small batches, this works well. The limitations show up at scale and on sites that don't serve their content openly.

2. When dedicated APIs become necessary

Three scenarios push past what Claude's native browsing can handle.

The first is authentication. Data behind login walls, paywalls, or cookie-based sessions is invisible to Claude's agent. Review platforms, gated employee directories, and member-only content all fall into this category.

The second is scale. Crawling 10,000 company websites through Claude's browser is slow and compute-heavy. Dedicated scraping infrastructure is built for throughput, not one-off research.

The third is anti-bot protection. Sites running Cloudflare, DataDome, or similar systems will block naive scraping. Dedicated providers handle rotating proxies, browser fingerprint randomization, and detection bypass, so you don't have to build any of that yourself.

3. Individual webpage scrapers: Firecrawl and Jina AI

For extracting clean, structured content from any public URL, two tools stand out.

Firecrawl converts any webpage into clean markdown or structured JSON. It handles JavaScript-rendered sites, removes navigation and boilerplate, and returns only the content you need. This works well for extracting pricing information, product descriptions, and company overviews at scale.
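Here is a minimal sketch of a single-page Firecrawl call using only the standard library. It assumes Firecrawl's v1 `/scrape` endpoint, Bearer-token auth, the `formats` and `onlyMainContent` options, and a response shaped like `{"data": {"markdown": ...}}`. Check these against Firecrawl's current API reference. The `FIRECRAWL_API_KEY` environment variable name is our own choice.

```python
import json
import os
import urllib.request

# Assumption: Firecrawl's v1 scrape endpoint; verify against the current API docs.
FIRECRAWL_SCRAPE_URL = "https://api.firecrawl.dev/v1/scrape"


def build_scrape_request(page_url: str, api_key: str) -> urllib.request.Request:
    """Build a POST request asking Firecrawl for the page's main content as markdown."""
    body = json.dumps({
        "url": page_url,
        "formats": ["markdown"],   # clean markdown only
        "onlyMainContent": True,   # drop navigation, footers, and other boilerplate
    }).encode("utf-8")
    return urllib.request.Request(
        FIRECRAWL_SCRAPE_URL,
        data=body,
        headers={"Authorization": f"Bearer {api_key}", "Content-Type": "application/json"},
        method="POST",
    )


def scrape_markdown(page_url: str) -> str:
    """Send the request and return the markdown (assumed response shape)."""
    req = build_scrape_request(page_url, os.environ["FIRECRAWL_API_KEY"])
    with urllib.request.urlopen(req, timeout=60) as resp:
        payload = json.load(resp)
    return payload["data"]["markdown"]
```

Building the request separately from sending it makes the payload easy to inspect and test before you spend credits on a batch run.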

Jina AI's Reader API works by prefixing any URL with https://r.jina.ai/ and returning the page as clean, readable text. Because it's a plain GET request with no body to build, it's the quickest way to get clean page content into a Claude Code script. Jina also offers an embedding API for semantic search across scraped document collections, which opens up similarity-based research at scale.
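Because the Reader is just a URL prefix, the whole integration fits in a few lines. This sketch sends an optional Bearer key, since Jina documents higher rate limits for keyed requests. The `JINA_API_KEY` environment variable name is our own assumption.

```python
import os
import urllib.request

JINA_READER_PREFIX = "https://r.jina.ai/"


def reader_url(page_url: str) -> str:
    """The target URL is appended directly after the Reader prefix."""
    return JINA_READER_PREFIX + page_url


def fetch_readable_text(page_url: str, timeout: float = 30.0) -> str:
    """GET the Reader URL and return the page as plain text."""
    headers = {}
    api_key = os.environ.get("JINA_API_KEY")  # optional; env var name is our choice
    if api_key:
        headers["Authorization"] = f"Bearer {api_key}"
    req = urllib.request.Request(reader_url(page_url), headers=headers)
    with urllib.request.urlopen(req, timeout=timeout) as resp:
        return resp.read().decode("utf-8", errors="replace")
```

For example, `fetch_readable_text("https://example.com/about")` requests `https://r.jina.ai/https://example.com/about`.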

Both tools integrate into Python scripts with a few lines of code. Combine them with Claude Code for extraction, cleaning, and normalization in a single pipeline.
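The cleaning step can run in plain Python before Claude sees the text. That keeps prompts short and cheap. Here is a minimal sketch, assuming image embeds and link URLs are noise for your extraction task. The 8,000-character cap is an arbitrary placeholder, not a recommendation from either tool.

```python
import re


def clean_markdown(md: str, max_chars: int = 8000) -> str:
    """Trim scraped markdown before handing it to Claude for extraction."""
    md = re.sub(r"!\[[^\]]*\]\([^)]*\)", "", md)      # drop image embeds entirely
    md = re.sub(r"\[([^\]]+)\]\([^)]*\)", r"\1", md)  # keep link text, drop the URL
    md = re.sub(r"[ \t]+\n", "\n", md)                # strip trailing whitespace
    md = re.sub(r"\n{3,}", "\n\n", md)                # collapse runs of blank lines
    return md.strip()[:max_chars]                     # cap length to bound prompt size
```

The output of either scraper can pass through this function before extraction. The order of the substitutions matters: images go first so their `![...]` syntax isn't half-consumed by the link pattern.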

Once you've identified the companies worth targeting from your scraping runs, the next step is finding the right decision-makers to reach. We built a free tool that maps out who works at any company.

You can find the right contacts at any company, for free:

People Finder Tool

4. Search engine APIs: Exa, Linkup, and Parallel Web Systems

Standard search engine results pages are a goldmine for B2B data, but scraping Google directly violates terms of service and triggers blocks quickly. Search engine APIs solve this.

Exa is built specifically for AI agents. It's a neural search engine that returns results based on semantic meaning rather than keyword matches, making it ideal for research tasks like "find companies similar to X" or "find posts where founders mention this problem." Exa is the highest-signal option for Claude Code pipelines that need to reason about results.
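The two research patterns above map to two Exa calls: a similarity search seeded with a company URL, and a natural-language search. This sketch assumes Exa's REST endpoints `/search` and `/findSimilar`, the `x-api-key` header, the `numResults` and `type` fields, and a response with a `results` list of objects carrying `url`. Verify these against Exa's API reference.

```python
import json
import urllib.request

# Assumptions: Exa endpoint paths, auth header, and field names; check current docs.
EXA_BASE_URL = "https://api.exa.ai"


def _exa_request(path: str, payload: dict, api_key: str) -> urllib.request.Request:
    return urllib.request.Request(
        EXA_BASE_URL + path,
        data=json.dumps(payload).encode("utf-8"),
        headers={"x-api-key": api_key, "Content-Type": "application/json"},
        method="POST",
    )


def similar_companies(seed_url: str, api_key: str, n: int = 10) -> urllib.request.Request:
    """'Find companies similar to X': pages semantically close to a seed URL."""
    return _exa_request("/findSimilar", {"url": seed_url, "numResults": n}, api_key)


def semantic_search(query: str, api_key: str, n: int = 10) -> urllib.request.Request:
    """A natural-language query matched on meaning rather than keywords."""
    return _exa_request("/search", {"query": query, "type": "neural", "numResults": n}, api_key)


def result_urls(response_json: dict) -> list[str]:
    """Pull result URLs out of a parsed response (assumed shape)."""
    return [r["url"] for r in response_json.get("results", [])]
```

Pass either request to `urllib.request.urlopen` and feed the parsed JSON to `result_urls`. The resulting list of URLs is what Claude then reads and reasons over.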

Linkup provides structured search results optimized for LLM consumption. It returns clean, citation-ready content that slots directly into Claude Code research pipelines without additional parsing.
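Here is a sketch of a citation-ready Linkup query. It assumes Linkup's `/v1/search` endpoint with Bearer auth and the `q`, `depth`, and `outputType` parameters as we understand them. It also assumes a `sourcedAnswer` response containing `answer` and a `sources` list with `name` and `url`. Confirm all of these in Linkup's API reference before use.

```python
import json
import urllib.request

# Assumptions: endpoint, parameter names, and response shape; verify in Linkup's docs.
LINKUP_SEARCH_URL = "https://api.linkup.so/v1/search"


def build_linkup_request(question: str, api_key: str, deep: bool = False) -> urllib.request.Request:
    payload = {
        "q": question,
        "depth": "deep" if deep else "standard",
        "outputType": "sourcedAnswer",  # an answer plus the sources it cites
    }
    return urllib.request.Request(
        LINKUP_SEARCH_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Authorization": f"Bearer {api_key}", "Content-Type": "application/json"},
        method="POST",
    )


def format_with_citations(response_json: dict) -> str:
    """Render the answer followed by numbered source lines."""
    lines = [response_json.get("answer", "").strip()]
    for i, src in enumerate(response_json.get("sources", []), start=1):
        lines.append(f"[{i}] {src.get('name', '')} {src.get('url', '')}".rstrip())
    return "\n".join(lines)
```

Keeping the source URLs next to each answer lets a later step in the pipeline check claims before they reach an outreach draft.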

Parallel Web Systems offers high-throughput web research APIs built for teams running thousands of queries in parallel. It's the right choice for large-scale list enrichment campaigns where speed and volume matter.
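Whichever provider handles the queries, the client side of a high-volume run looks much the same. This is not Parallel's API, and we don't reproduce its request format here. It's a generic sketch of bounded concurrency, where `run_query` is a hypothetical stand-in for any single-query call from this article. The worker count is an assumption to tune against your provider's rate limits.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable


def fan_out(queries: list[str], run_query: Callable[[str], dict], max_workers: int = 16) -> dict[str, dict]:
    """Run many queries concurrently with a hard cap on in-flight requests.

    Each failure is recorded for its own query instead of aborting the batch.
    Results keep input order; duplicate queries collapse into one key.
    """
    def safe(query: str) -> tuple[str, dict]:
        try:
            return query, {"ok": True, "result": run_query(query)}
        except Exception as exc:  # keep going; inspect failures afterwards
            return query, {"ok": False, "error": repr(exc)}

    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        return dict(pool.map(safe, queries))
```

Recording failures instead of raising means you can retry only the failed keys after a 10,000-query run.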

Combining search APIs with technology data adds another layer of targeting precision. We built a free tool that surfaces what tech stack any company is running so you can filter and prioritize lists before sending.

You can see what any company's tech stack looks like, for free:

Tech Stack Finder Tool

5. Pre-built niche scrapers: Apify and Rapid

For platforms with specialized infrastructure requirements, pre-built scrapers save weeks of engineering work.

Apify is the largest marketplace for pre-built web scrapers. Their actor library includes scrapers for Google Maps, LinkedIn, Instagram, Amazon, Yelp, and hundreds of other platforms. Each actor handles authentication complexity, anti-bot measures, and data structuring so you're not building any of that from scratch.

The Google Maps actor alone opens up a complete local-business prospecting pipeline. Scrape any geographic area and business category to return names, phone numbers, email addresses, websites, and review counts. Claude Code can call Apify's API to trigger any actor, then process the returned results for qualification and outreach sequencing.
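Here is a minimal sketch of that loop: trigger a run, then qualify the returned places. It assumes Apify's v2 `run-sync-get-dataset-items` endpoint, Bearer-token auth, and the `compass/crawler-google-places` actor. It also assumes that actor's input fields (`searchStringsArray`, `locationQuery`, `maxCrawledPlacesPerSearch`) and output fields (`title`, `phone`, `website`, `reviewsCount`). Check the actor's README for the current schema. The review threshold is a hypothetical qualification rule.

```python
import json
import urllib.request

# Assumptions: endpoint, actor ID, and field names; verify in Apify's docs.
ACTOR_ID = "compass~crawler-google-places"  # "/" in the actor name becomes "~" in API paths
RUN_SYNC_URL = f"https://api.apify.com/v2/acts/{ACTOR_ID}/run-sync-get-dataset-items"


def build_maps_run(search: str, location: str, token: str, max_places: int = 100) -> urllib.request.Request:
    run_input = {
        "searchStringsArray": [search],  # business category, e.g. "dentist"
        "locationQuery": location,       # geographic area
        "maxCrawledPlacesPerSearch": max_places,
    }
    return urllib.request.Request(
        RUN_SYNC_URL,
        data=json.dumps(run_input).encode("utf-8"),
        headers={"Authorization": f"Bearer {token}", "Content-Type": "application/json"},
        method="POST",
    )


def qualify(places: list[dict], min_reviews: int = 20) -> list[dict]:
    """Keep places that list a website and have at least `min_reviews` reviews."""
    return [
        {
            "name": p.get("title"),
            "phone": p.get("phone"),
            "website": p.get("website"),
            "reviews": p.get("reviewsCount") or 0,
        }
        for p in places
        if p.get("website") and (p.get("reviewsCount") or 0) >= min_reviews
    ]
```

The synchronous endpoint suits short runs. For large areas, start the run asynchronously and read its dataset once it finishes.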

Rapid (acquired by Nokia) runs a similar API marketplace with a different catalog. Their platform aggregates third-party APIs including enrichment providers, social data scrapers, and niche data sources. When you need data from a platform with no public API, Rapid is often the fastest path to a working integration.
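Rapid-hosted APIs share one calling convention. Your account key goes in `X-RapidAPI-Key` and the specific API's host goes in `X-RapidAPI-Host`. The host, path, and parameters below are hypothetical. Each real API's listing defines its own.

```python
import urllib.parse
import urllib.request


def build_rapidapi_request(host: str, path: str, params: dict, api_key: str) -> urllib.request.Request:
    """GET request to a Rapid-hosted API: the host is per-API, the key is per-account."""
    query = urllib.parse.urlencode(params)
    url = f"https://{host}{path}" + (f"?{query}" if query else "")
    return urllib.request.Request(
        url,
        headers={"X-RapidAPI-Key": api_key, "X-RapidAPI-Host": host},
    )
```

Because the headers are the same everywhere, switching to a different data source in the marketplace usually means changing only the host, path, and parameters.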

Social media and job-posting data from these platforms surface behavioral patterns that indicate buying intent. Hiring signals, content engagement, and competitor research activity are all accessible through pre-built actors, with no custom infrastructure to build.
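Once job postings are flowing in, turning them into a hiring signal can be as simple as weighted keyword matching. This sketch assumes each posting is a dict with `company_domain` and `title` keys; the real shape depends on the actor you use. The keywords and weights are hypothetical and should reflect the roles that matter to your product.

```python
from collections import defaultdict

# Hypothetical: job titles suggesting a company is building out the function you sell into.
SIGNAL_KEYWORDS = {"revops": 3, "sales operations": 3, "data engineer": 2, "sdr": 1}


def hiring_signal_scores(postings: list[dict]) -> dict[str, int]:
    """Sum keyword weights of open roles per company domain (substring match on title)."""
    scores: dict[str, int] = defaultdict(int)
    for post in postings:
        title = post.get("title", "").lower()
        for keyword, weight in SIGNAL_KEYWORDS.items():
            if keyword in title:
                scores[post["company_domain"]] += weight
    return dict(scores)
```

Companies with no matching roles simply don't appear in the output. Sort the result by score to decide whom to contact first.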

Based on these data sources, we built a free tool that tracks active buying signals across public data so you can time outreach to when prospects are most likely to respond.

You can see which companies are showing buying signals right now, for free:

Intent Signals Tool

6. Choosing the right API for your use case

The right choice depends on what data you need and where it lives.

→ Clean webpage content from individual URLs: Firecrawl or Jina AI

→ Semantic research and AI-optimized search results: Exa or Linkup

→ High-volume parallel research at scale: Parallel Web Systems

→ Google Maps, LinkedIn, Instagram, and niche platform data: Apify

→ Third-party API aggregation for platforms with no public API: Rapid

For most B2B teams building with Claude Code, Apify and Exa cover 80% of use cases. Start there, then add specialized providers as your data needs get more granular.

The teams building proprietary data pipelines from sources competitors aren't using create a compounding advantage. Bought lists get you the same prospects as everyone else. Custom pipelines get you the ones no one else has found yet.

Once you've identified target companies through your scraping infrastructure, the next step is expanding your addressable market by finding similar companies that match those same criteria.

You can find companies that match your best accounts, for free:

Lookalike Finder Tool